AI FOR REAL LIFE

Motion Control Changes the Game

Kling 3.0 Motion Control stress-tested for real production. Here's what worked, and what still breaks.

All right, so this week I want to break down something I’ve been testing that I think is genuinely one of the more significant updates we’ve seen in AI video this year. And I also want to catch you up on a few other things that dropped in the last two weeks.

Let’s start with the main thing.

Kling 3.0 Motion Control: What It Actually Is

Kling dropped Motion Control for their 3.0 model on March 4th, and the concept is straightforward: you give it a still image of a character and a reference video showing a motion, and it transfers that motion onto your character.

What This Means for Your Workflow

Here’s the thing about Motion Control. It’s not a magic button. The quality of your output is directly tied to the quality of your reference video.

What Else Dropped: Cinema Studio 2.5

Two weeks after Kling’s Motion Control launch, Higgsfield dropped Cinema Studio 2.5 on March 18th. Worth paying attention to if you’ve been using Higgsfield as part of your stack.

The Sora Situation

One more thing worth mentioning: OpenAI quietly shut down the Sora standalone app this month.

One More: Adobe Firefly

Adobe Firefly expanded this month and added Kling directly into its model lineup. They’re now at 30+ models including Google, OpenAI, and Runway alongside Kling. They also launched Quick Cut, which turns raw footage into a structured first cut automatically.

Midjourney V8 Is Here: And It’s Fast

Midjourney dropped V8 Alpha on March 17th, and the headline number is speed: 4 to 5 times faster than V6. Faster iterations mean you can actually test more concepts in a session instead of waiting on generations.

Suno 5.5 Dropped: And It Gets Personal

Suno released 5.5 on March 26th, and this one adds three features worth paying attention to if you use it for background music or score work.

All right, that’s Issue #23.

A lot moved this week. Motion Control is real and worth testing. Cinema Studio 2.5 is a meaningful update if Higgsfield is in your stack. Midjourney V8 is worth keeping an eye on even in alpha. Suno 5.5 is a bigger shift than the version number suggests. And the broader market is settling into a shape that makes tool selection less overwhelming. You don’t need everything. You need the right combination for what you’re building.

One more thing: I’ve been busy behind the scenes. I’m building out some new courses and guides that I think are going to be genuinely useful for where this space is heading. Nothing to announce just yet, but they’re coming soon. Stay tuned.

I’ll catch you in the next one.

- Khalil

Stop Chasing Docs. Automate Them.

Docs piling up faster than you can write them? Same.

Every team knows the feeling — product ships, docs don't. Changelogs get forgotten. Style violations quietly accumulate. Broken links go unnoticed for months.

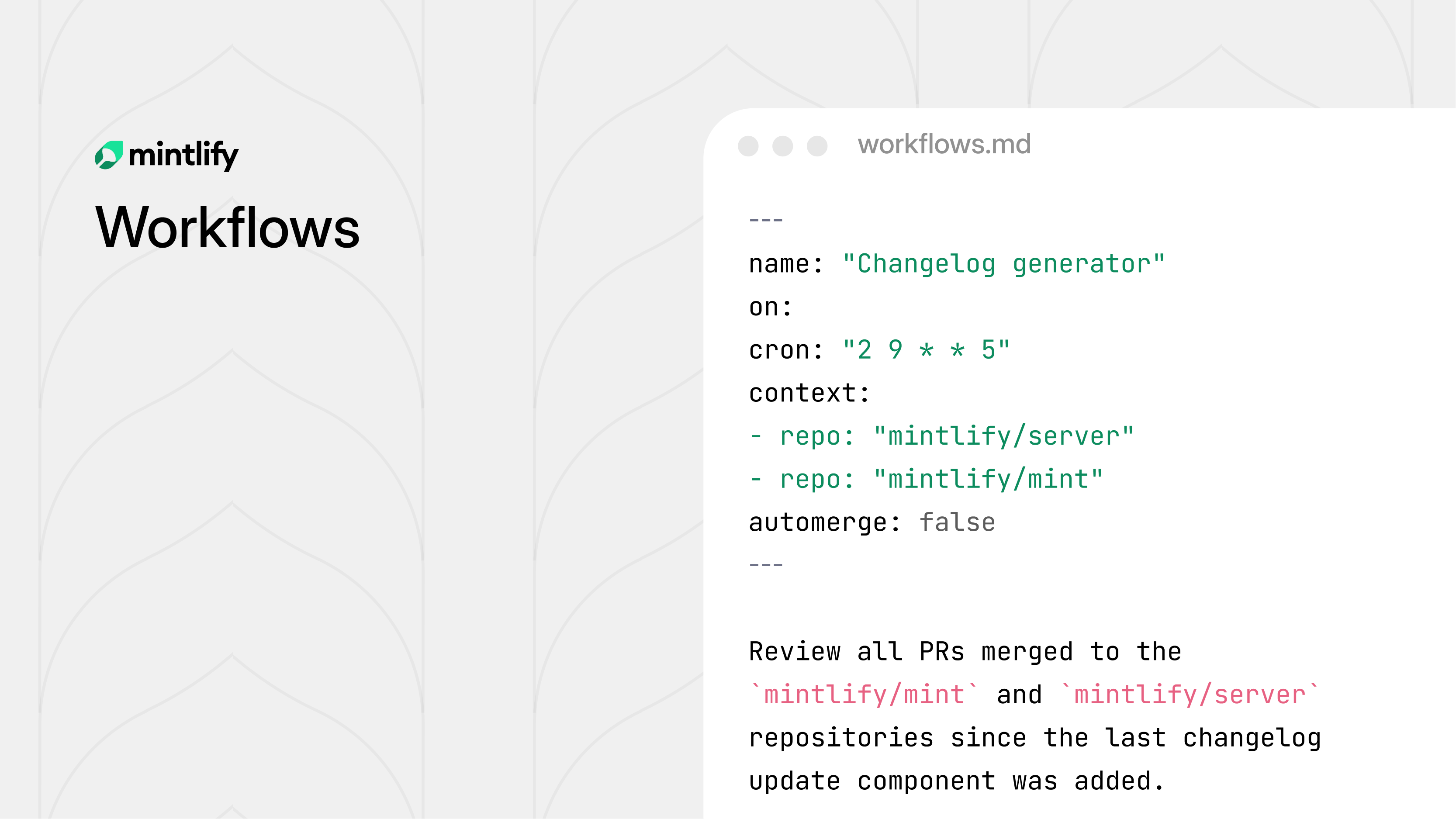

Mintlify's new Workflows feature fixes this. Define automation rules, and the agent handles the recurring maintenance work for you — on your schedule, by your rules.

Draft docs when a PR merges. Generate changelogs every Friday. Run a style audit on every push. Flag translation lag before it becomes a problem. Each workflow is version controlled, fully configurable, and fits into your existing review process.

You decide when it runs, what it checks, and whether changes get committed directly or opened as a pull request for review.

The result: documentation that actually keeps up with your product, without someone manually chasing it down.