AI for Real Life

Issue #22 — Have We Finally Beaten the Uncanny Valley?

Hey, it’s Khalil.

For a long time, AI dialogue has been the final boss of AI filmmaking.

Wide shots were easy. Stylized montage worked. Even dramatic lighting felt manageable.

But the moment you cut to a tight close-up and someone starts talking, your brain makes a decision instantly.

Believe it or don’t.

That’s where the uncanny valley lives.

This week I stress-tested Kling 3.0 Omni inside a real project. Not a demo. A real dialogue scene from The Life of the Lazy Mon. Two characters. One bar. Multi-angle coverage. Subtle bro-comedy energy.

I gave myself one rule.

No ElevenLabs.

No external lip sync.

No patchwork fixes.

Just Omni.

I needed to know if it could actually carry performance on its own.

The Workflow Shift

The biggest breakthrough wasn’t better prompting. It was how I built the scene.

Instead of writing one massive cinematic paragraph and hoping for consistency, I built foundations first. Character elements. Scene elements. Props. Voice binding.

When you create a character element in Omni, you’re building an identity anchor. Multiple angles. Stable lighting. Face consistency. You’re teaching the model who this person is before asking them to act.

Same with the bar location. Lock it in first. Don’t hope the model remembers layout later.

It’s pre-production inside the model.

Skip it, and you burn credits fixing drift.

The real shift happened with coverage.

I stopped treating Kling like a clip generator and started treating it like a camera. I generated a clean two-shot, pulled a usable frame, re-anchored it, then built reverse angles and over-the-shoulders from there.

Instead of chasing a perfect multi-shot dialogue in one pass, I stacked the scene like blocks.

It felt closer to directing than prompting.

Not perfect. Some generations still fail. At one point a random shirtless guy walked through the bar for no reason.

But when it works, it’s clean. Consistent lighting. Stable layout. Minimal cleanup.

That’s new.

And the control layer matters.

With most models, you avoid names. With Omni, you lean into them. Build the elements first. Then write the prompt normally. Then tag each element by name.

That shift changes everything.

Performance Strategy

Dialogue still breaks if you push too far.

Instead of chasing 15-second perfection, I focused on 6 to 8 second acting beats.

Close-up line delivery.

Reaction shot.

Micro-expression.

When I kept the beats tight, I started seeing something different. Subtle smirks. Natural blinks. Slight head tilts that felt intentional.

For the first time, I caught myself forgetting I was watching AI.

Just for a second.

That pause matters.

It’s not perfect. But it’s close enough that I’m rethinking whether I should reach for external lip sync tools by default.

Cost Reality

Most of my testing has been at 720p.

I know.

But the logic is simple. Generate cheaper. Find the keepers. Upscale the finals in Topaz.

It becomes a math problem.

Burn credits upfront, or refine strategically?
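
If you want to see that math on paper, here’s a rough sketch. Every number in it is made up for illustration. These are not real Kling or Topaz prices, and the function names are mine, so plug in whatever your own plan actually charges.

```python
# A rough cost comparison: draft at low resolution and upscale only the keepers,
# versus generating every attempt at full resolution.
# All costs are HYPOTHETICAL placeholder units, not real Kling or Topaz pricing.

def draft_then_upscale_cost(attempts, keepers, draft_cost, upscale_cost):
    """Cost of generating every attempt as a cheap draft, then upscaling only the keepers."""
    return attempts * draft_cost + keepers * upscale_cost


def full_res_cost(attempts, full_cost):
    """Cost of generating every attempt at full resolution from the start."""
    return attempts * full_cost


# Hypothetical scene: 20 generations, 4 of them good enough to keep.
attempts = 20
keepers = 4

draft_total = draft_then_upscale_cost(attempts, keepers, draft_cost=10, upscale_cost=5)
full_total = full_res_cost(attempts, full_cost=25)

print("Draft then upscale:", draft_total)
print("Full resolution from the start:", full_total)
```

The lower your keeper rate, the more the draft-first approach saves, because you only pay the finishing cost on shots that earned it.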

The bigger shift isn’t resolution.

It’s speed plus control.

This scene would have taken weeks with older workflows. With Omni, it took a couple of days.

That changes what feels possible.

🚀 This Week’s AI Production Notes

While I was head-down in the bar scene, the rest of the AI world kept moving.

Here’s what matters for your workflows this week.

Kling 3.0 Omni in ComfyUI

Node-based creators can now access Omni’s multi-shot, element-locking, and native audio generation features through Partner Nodes.

The Audio-First Shift

Lightricks partnered with ElevenLabs to launch audio-to-video generation in LTX-2. Instead of adding sound after the fact, audio now drives motion and performance from frame one. For dialogue-heavy creators, that’s a major shift.

HeyGen “Video Agent” Update

A redesigned script panel and a 15-second Instant Avatar creator just dropped. The new Video Agent allows editable motion graphics, which means client revisions no longer require full re-renders.

Google Veo 3.1 + Nano Banana Pro

Google tightened integration between Gemini image generation and Veo 3.1. You can now generate “ingredient” images and feed them directly into Veo with stronger identity consistency.

🕊 The Seedance 2.0 Moment

Seedance 2.0 officially launched on February 12th, and it’s become the quiet storm in the industry. As of February 23rd, though, it still isn’t available on major platforms in the US.

Built on a unified multimodal architecture, it doesn’t just layer sound on top. It understands audio and scene physics together.

The viral clips handling complex human motion are forcing people to rethink what realistic AI physics even means.

There’s legal pushback brewing around training data.

But the tech itself feels like a glimpse of something different.

A world where we don’t need as many workarounds.

I’m hoping to get hands-on time with it soon.

So… Did We Beat the Uncanny Valley?

Not completely.

But we’re closer than we’ve ever been.

We’re no longer asking, “Can it generate something?”

We’re asking, “Can it hold up under pressure?”

Close-ups. Dialogue. Sustained performance.

When I watch AI dialogue now, my brain hesitates just a little longer before rejecting it.

That hesitation might be the real milestone.

Stay building,

Khalil


Better prompts. Better AI output.

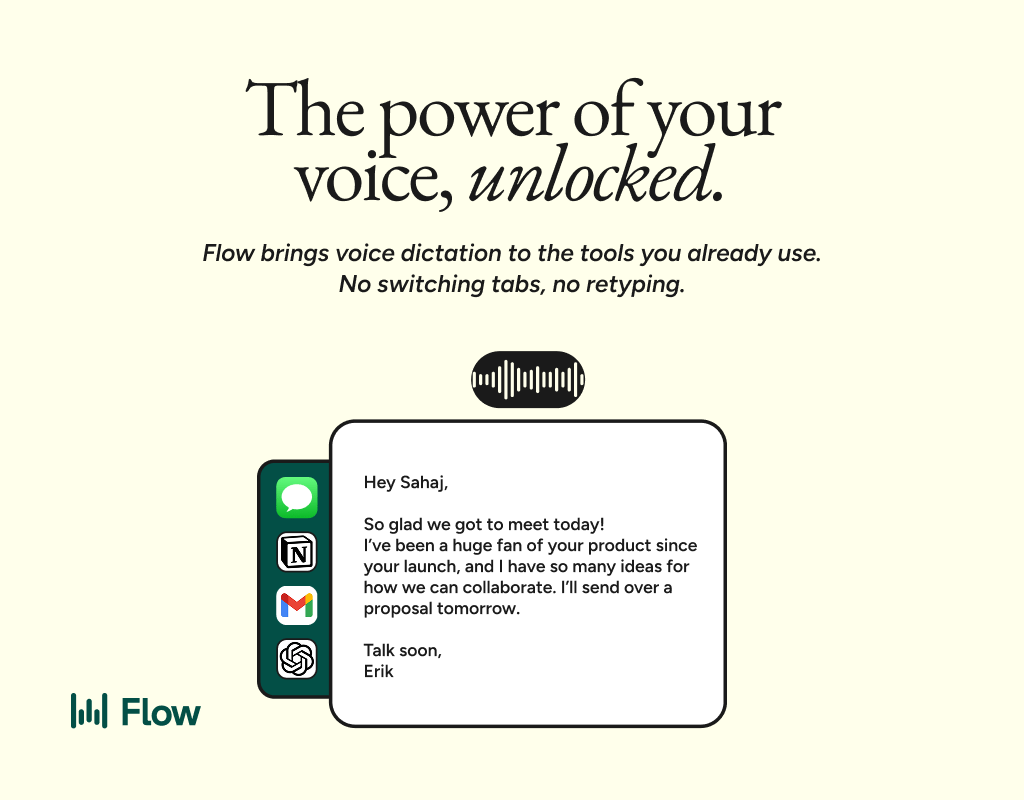

AI gets smarter when your input is complete. Wispr Flow helps you think out loud and capture full context by voice, then turns that speech into a clean, structured prompt you can paste into ChatGPT, Claude, or any assistant. No more chopping up thoughts into typed paragraphs. Preserve constraints, examples, edge cases, and tone by speaking them once. The result is faster iteration, more precise outputs, and less time re-prompting. Try Wispr Flow for AI or see a 30-second demo.